
Jessica Brown

Apr 15, 2017


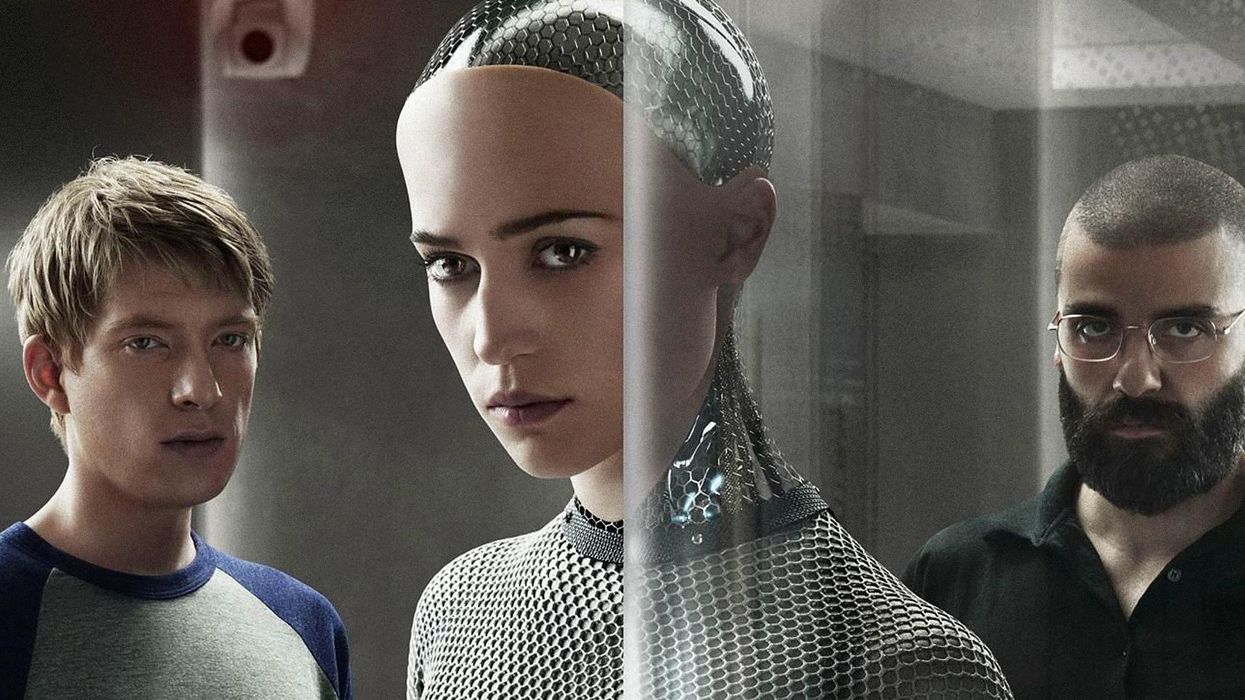

Film 4 / DNA

One thing that’s certain about our increasingly automated future is that artificial intelligence will play an ever more central role in our lives.

But while we think of these machines as robotic and logical, they can actually acquire cultural biases around race and gender.

Researchers from Princeton University and the University of Bath found that a machine learning technique called “word embedding” can absorb biases hidden in language patterns.

This technique helps computers make sense of language and is already used in web search and machine translation. It takes a statistical approach, building a mathematical representation of words and their meanings from the patterns in which they appear in text.
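To give a rough feel for what a “mathematical representation” of a word can look like, here is a minimal sketch in Python. It is not the system the researchers studied: the four-sentence corpus is invented, and the helper names `cooccurrence_vectors` and `cosine` are hypothetical. Each word is represented simply by counts of the words that appear near it, and two words are compared by the angle between their count vectors.

```python
import math
from collections import Counter, defaultdict

# Toy corpus, invented purely for illustration.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the dog",
    "the car needs fuel",
]

def cooccurrence_vectors(sentences, window=2):
    """Map each word to counts of the words seen within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i, word in enumerate(words):
            start = max(0, i - window)
            end = min(len(words), i + window + 1)
            for j in range(start, end):
                if j != i:
                    vectors[word][words[j]] += 1
    return vectors

def cosine(a, b):
    """Cosine similarity between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

vectors = cooccurrence_vectors(corpus)
cat_dog = cosine(vectors["cat"], vectors["dog"])    # words used in similar contexts
cat_fuel = cosine(vectors["cat"], vectors["fuel"])  # words that share no context here
```

In this toy corpus “cat” ends up closer to “dog” than to “fuel”, purely because of the company each word keeps. Real word embeddings are trained on vastly more text and use denser vectors, but the principle that meaning is inferred from usage is the same, which is how patterns in human writing find their way into the numbers.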

The study, published in the journal Science, measured how strongly the system associated pairs of word concepts with each other.

The researchers found that the words “woman” and “female,” for instance, were more associated with arts and humanities jobs and words relating to the family, whereas “man” and “male” were more closely linked with maths and engineering, and words relating to careers.
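An association of this kind can be expressed as a simple difference of similarities. The sketch below is an assumption-laden illustration, not the study’s procedure, which used a more elaborate statistical test: the two-dimensional vectors in `toy_vectors` are hand-picked to mimic the reported pattern, and the `association` function is a hypothetical helper.

```python
import math

# Hypothetical 2-D vectors, hand-picked to mimic the reported pattern.
# They are NOT taken from any real embedding model.
toy_vectors = {
    "woman": (0.9, 0.2),
    "man": (0.2, 0.9),
    "family": (0.8, 0.3),
    "career": (0.3, 0.8),
}

def cosine(u, v):
    """Cosine similarity between two 2-D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

def association(word, attr_a, attr_b, vectors):
    """Positive when `word` sits closer to `attr_a` than to `attr_b`."""
    return (cosine(vectors[word], vectors[attr_a])
            - cosine(vectors[word], vectors[attr_b]))

woman_score = association("woman", "family", "career", toy_vectors)
man_score = association("man", "family", "career", toy_vectors)
```

With these made-up vectors, `woman_score` comes out positive (closer to “family”) and `man_score` negative (closer to “career”). In a real embedding nobody chooses the numbers by hand; any such skew comes from the text the system was trained on.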

And it wasn’t just gender. The researchers found that the system associated European American names with more pleasant words, and African American names with unpleasant words.

But it’s not the machine’s fault. It’s ours.

Joanna Bryson, a computer scientist at the University of Bath and a co-author, said:

“A lot of people are saying this is showing that AI is prejudiced. No. This is showing we’re prejudiced and that AI is learning it.”

They learn it from us.
