
Greg Evans

Apr 08, 2018

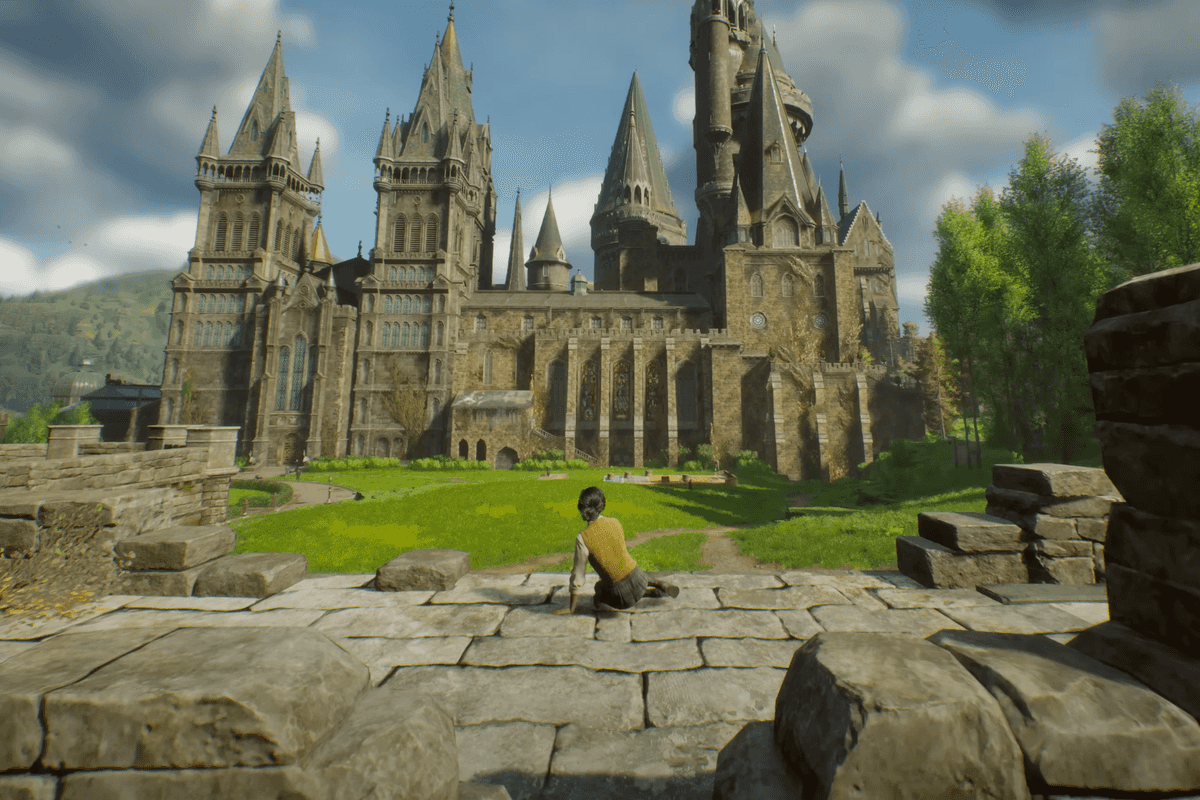

Picture: Moviestore/REX/Shutterstock

It's safe to say that artificial intelligence isn't having the greatest of times at the moment.

Earlier this week Elon Musk warned that AI could become an "immortal dictator", while a supercomputer has recently started composing its own incredibly creepy poetry.

Now AI experts are becoming sceptical about its use too: a group of them has chosen to boycott a university in South Korea that has gone into partnership with a weapons manufacturer.

The Korea Advanced Institute of Science and Technology (KAIST) has received a letter of concern signed by more than 50 AI experts after announcing that it would open a joint research centre with Hanwha Systems.

Hanwha has already developed robotic sentry guns that patrol the border between South and North Korea and, according to The Verge, has built cluster munitions banned by many major nations.

The defence company wants to "develop artificial intelligence (AI) technologies to be applied to military weapons" that could operate without human control, according to The Korea Times.

Yeah, this doesn't sound like The Terminator movies at all...does it?

The opposition to the university, which is renowned for its research in robotics, was organised by Professor Toby Walsh of the University of New South Wales.

Experts from 30 countries added their signatures to the boycott. Walsh said in a press statement:

We can see prototypes of autonomous weapons under development today by many nations including the US, China, Russia, and the UK.

We are locked into an arms race that no one wants to happen. KAIST’s actions will only accelerate this arms race. We cannot tolerate this.

In an attempt to reassure us that Judgement Day isn't just around the corner, KAIST president Shin Sung-chul said that the new facility would not be developing any weapons.

The BBC quotes him as saying:

I reaffirm once again that Kaist will not conduct any research activities counter to human dignity including autonomous weapons lacking meaningful human control.

Kaist is significantly aware of ethical concerns in the application of all technologies including artificial intelligence.

In addition, he explained that the university would be focused on developing algorithms for "efficient logistical systems, unmanned navigation and aviation training systems."

Even if KAIST is true to its word and isn't developing AI weapons, the UN is still planning to address the subject in Geneva next week.

123 member nations will discuss the dangers posed by killer robots and lethal autonomous weapons. Countries like Argentina, Pakistan and Egypt have already backed a ban.

Walsh feels that the problem should be dealt with soon, before it becomes irreversible:

If developed, autonomous weapons will [...] permit war to be fought faster and at a scale greater than ever before.

This Pandora’s box will be hard to close if it is opened.

HT BBC
