Joe Vesey-Byrne

Sep 03, 2017

Picture:

iStock/Getty Images

Some of the world's smartest people have described what they consider to be the greatest threats to humanity.

A survey of 50 Nobel Prize winners for Times Higher Education has found ten common predictions for the end of humankind.

The laureates represent a quarter of the living winners of the chemistry, physics, physiology or medicine, and economics prizes.

(Sorry Bob)

The results were as follows:

1. Over a third believe population rise and environmental degradation will end life as we know it.

18 laureates (34 per cent) named overpopulation or environmental disaster (mostly to do with climate change) as the biggest threat.

THE quotes one laureate as stating:

Climate change [and providing] sufficient food and fresh water for the growing global population…are serious problems facing humankind.

Science is needed to address these problems and also to educate the public to create the political will to solve these problems.

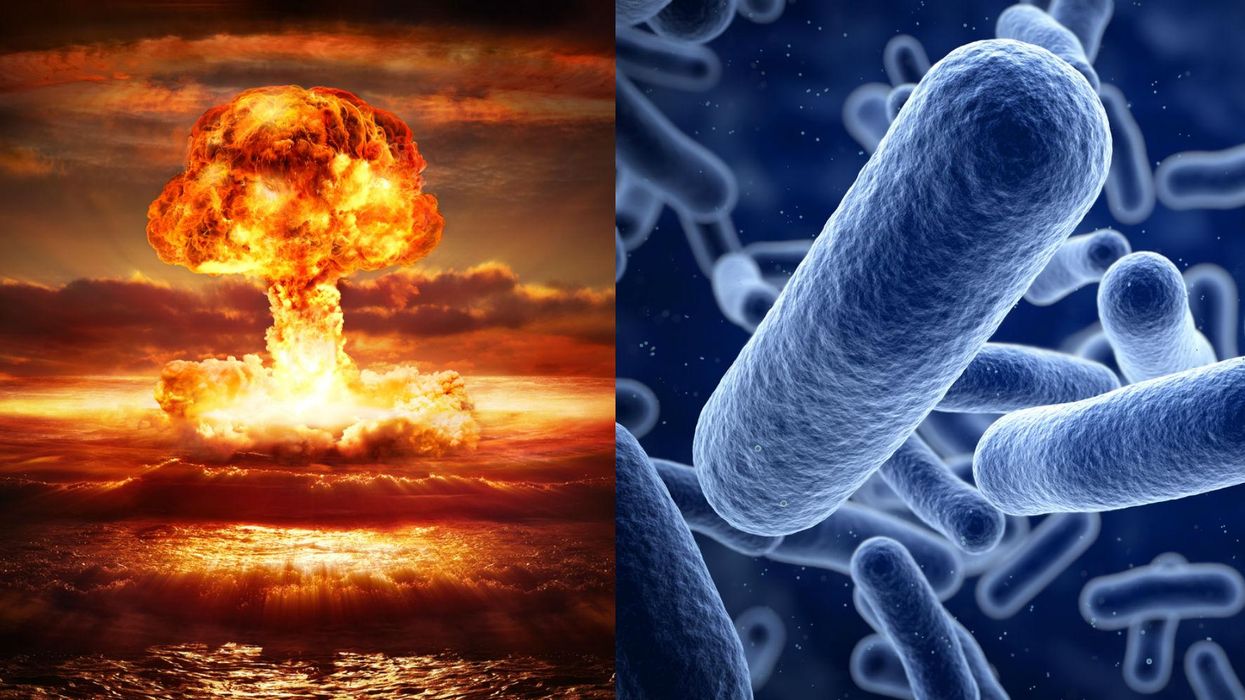

2. Nuclear war - 23 per cent, 12 laureates

North Korea is now believed to have made its sixth test of a missile capable of carrying a nuclear warhead.

These heightened tensions between the rogue state and the international community may be responsible for pushing the outbreak of nuclear war high up the list.

Respondents cited 'warmonger dictators' and 'populist regimes in possession of nuclear weapons'.

3. Infectious disease or drug resistance - 8 per cent, 4 laureates

Four laureates cited either a new disease or new strains of existing diseases that are resistant to current drugs and medication.

According to the World Health Organisation, antibiotic resistance is one of the biggest threats to 'global health, food security, and development today'.

4. Selfishness and a loss of honesty - 8 per cent, 4 laureates

Tied for third place with drug resistance is the threat that individuals pose to humankind as a whole.

Laureates said they feared a loss of 'humanist perspective as we rush into the age of the internet and its seductions'.

5. Ignorant leaders - 6 per cent, 3 laureates

One wonders if these three laureates had anyone in particular in mind?

6. Fundamentalism or terrorism - 6 per cent, 3 laureates

Religious extremism, and the fear that terrorists could get hold of weapons of mass destruction, was another concern among laureates.

7. Ignorance and distortion of the truth - 6 per cent, 3 laureates

As they are scientists, it's unsurprising that some described the distortion of truth as a considerable threat to humankind.

In a world where scientific results are thrown into doubt, or dismissed as 'fake news', how can science hope to prevent any kind of disaster?

8. Artificial intelligence - 4 per cent, 2 laureates

This is possibly Elon Musk's favourite refrain, but fear of computers surpassing human intelligence and overthrowing humankind has existed more or less since the first computers.

9. Inequality - 4 per cent, 2 laureates

Linking many of these is the rise of populism and political polarisation.

Resurgent climate scepticism, and a war of political rhetoric regarding nuclear weapons, would explain the top two threats to humankind.

10. Drugs - 2 per cent, 1 laureate

Fear of a new, powerfully addictive drug that destroys society was the main threat in the opinion of one laureate.

The same survey found that 40 per cent of laureates believe that populism and political polarisation are a grave threat to scientific progress. Only 5 per cent believed them to be 'no threat'.

According to THE, two respondents specifically mentioned President Trump in their list of threats.

Despite the doom and gloom posed in the question, many of the laureates were optimistic about mankind, and felt that an apocalypse was unlikely.

One told THE:

The human species is so successful in making the world a better place.

The same laureate said that the solution to most of these threats is colonisation of space.

The ultimate insurance policy is to make humanity a multiplanet species.

And science obviously has a big role to play in that.
