Louis Dor

Oct 17, 2015

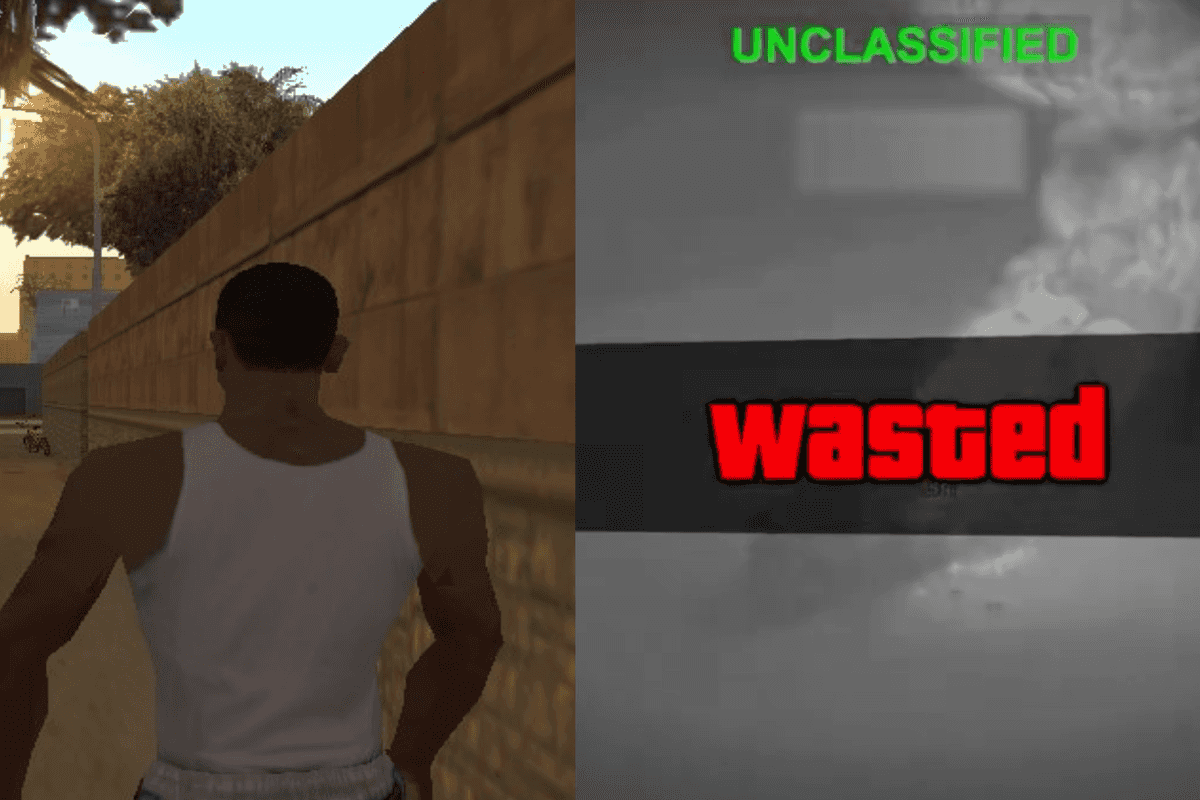

Researchers at Stanford University have created a system that maps one person’s facial expressions onto the video feed of another person in real time. It’s the creepiest thing we’ve ever seen.

The system could be applied to conference calls, with a translator’s mouth movements superimposed onto the live feed of the person making the call.

The accompanying paper describes how the system works: for every frame, it takes into account facial details, expression, skin tone and lighting, then manipulates the target feed in a photorealistic manner.

The study concluded:

Overall, we believe that the real-time capability of our method paves the way for many new applications in the context of virtual reality and tele-conferencing.

We also believe that our method opens up new possibilities for future research directions; for instance, instead of tracking a source actor with an RGB-D camera, the target video could be manipulated based on audio input.

What a remarkable piece of technology.

SHUT IT DOWN

To watch the full video, see below:
